How to Prepare Your Data for AI Features

Your AI roadmap is slipping. The sprint reviews keep pushing the same features to next quarter. The demo worked beautifully, but production is another story. And when you dig into why, the answer is almost never the model.

According to the Stanford Enterprise AI Playbook, 77% of the hardest challenges in AI implementation are invisible costs: data quality, process redesign, and change management. Technology was consistently rated the easiest part. A separate MIT NANDA study found that 95% of generative AI pilot programs fail to produce measurable financial impact, not because of model quality, but because of poor workflow integration and misaligned data foundations.

The real bottleneck is almost always the data layer sitting underneath the feature you're trying to ship.

This post breaks down what "AI-ready data" actually means for product and engineering teams, where most teams get stuck, and a practical checklist to diagnose your own gaps before they derail the next sprint.

AI-Ready Data Is Not What You Think It Is

Most teams assume "AI-ready" means clean data. Structured tables, no nulls, consistent formats. That's the traditional data management standard, and it's the wrong bar for AI.

Traditional data preparation optimizes for human readability: tidy dashboards, standardized reports, minimal variation. AI models need something different. They need data that reflects the messy, real-world conditions they'll encounter in production. Outliers, edge cases, contextual nuance. A fraud detection model trained only on clean, idealized transaction data will fail the moment it hits actual customer behavior.

AI-ready data is fit-for-purpose, not universally clean. What makes data AI-ready depends entirely on the use case.
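One way to keep edge cases visible instead of scrubbing them out is to flag them and pass them along. The sketch below is a minimal illustration, not a prescribed method: the `flag_outliers` helper, the `amount` field, and the 1.5 x IQR rule are all assumptions chosen for the example.

```python
import statistics

def flag_outliers(rows, field, k=1.5):
    """Return every row with an added `is_outlier` flag (IQR rule).

    Nothing is dropped: downstream training or evaluation code decides
    how to use the flagged rows.
    """
    values = [row[field] for row in rows]
    q1, _, q3 = statistics.quantiles(values, n=4)  # quartile cut points
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    return [{**row, "is_outlier": not (low <= row[field] <= high)} for row in rows]

# Hypothetical transaction amounts, including one extreme value.
transactions = [{"amount": a} for a in [20, 22, 19, 21, 23, 20, 5000]]
flagged = flag_outliers(transactions, "amount")
outliers = [row for row in flagged if row["is_outlier"]]
```

The design choice is that the pipeline annotates rather than filters, so a fraud model still sees the unusual transaction during training and evaluation.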

There's a second dimension most teams miss: AI-ready data is not a one-time preparation task. It's a continuous process. As your AI models evolve, their data requirements shift, and the governance layer underneath needs to track that drift and adapt. As we've written before, data requirements shift constantly with the specific use case and type of AI involved, which means your data pipeline needs to be built for ongoing iteration, not a single launch.

The Four Bottlenecks That Kill AI Roadmaps

After working with SaaS teams across industries, we see the same four failure patterns repeat. Each one looks like a different problem on the surface. Underneath, they all trace back to the data layer.

1. Fragmented Context Across Too Many Tools

This is the one nobody talks about until it's too late. Your business definitions live in Confluence. Technical metadata sits in a data catalog. Lineage is tracked somewhere else, if at all. Semantic definitions are buried in BI tools or dbt. Governance policies are in a GRC system.

When your AI feature needs to understand what "revenue" means in your product, it can't reconcile three conflicting definitions across eight systems. It hallucinates. The feature breaks. The sprint slips.

Gartner's 2026 Data and Analytics Summit found that the average enterprise's context is spread across 8 to 12 disconnected tools. That fragmentation isn't just inconvenient. It's the primary reason AI agents fail in production.
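A quick way to see how bad the fragmentation is: export the definitions you can find and check where they disagree. This is a rough sketch under stated assumptions. The source names, metric names, and definition strings are hypothetical, and real exports from a wiki, dbt, or a BI tool would need their own parsing.

```python
from collections import defaultdict

def find_conflicting_definitions(records):
    """Group (source, metric, definition) records by metric and return
    only the metrics whose sources disagree."""
    by_metric = defaultdict(dict)
    for source, metric, definition in records:
        # Normalize case and whitespace so cosmetic differences don't count.
        by_metric[metric.lower()][source] = " ".join(definition.lower().split())
    return {
        metric: sources
        for metric, sources in by_metric.items()
        if len(set(sources.values())) > 1
    }

# Hypothetical definitions collected from three tools.
records = [
    ("confluence", "revenue", "Sum of invoiced amounts"),
    ("dbt", "revenue", "sum of invoiced amounts minus refunds"),
    ("bi_tool", "revenue", "Sum of  invoiced amounts"),
    ("dbt", "active_users", "users with a session in the last 30 days"),
]
conflicts = find_conflicting_definitions(records)
```

Here `conflicts` contains only `revenue`, because the dbt definition subtracts refunds while the other two do not.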

2. Data Quality Gaps That Only Surface in Production

Data quality issues account for 21% of AI project delays, according to the Stanford Enterprise AI Playbook. But the painful part is timing: these gaps almost never surface during the POC. They show up in production, when edge cases and real user behavior hit the model. By then, the feature is already promised on the roadmap.

The fix isn't a one-time data audit. It's continuous qualification: ongoing checks that verify your data still matches the conditions your model was trained on.
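As a minimal sketch of what one such ongoing check could look like, the snippet below computes the Population Stability Index (PSI), a common way to compare a production sample against the training sample for a single numeric feature. The function name, the bin count, and the 0.25 alert threshold are illustrative assumptions (0.25 is a widely used rule of thumb, not a figure from the studies cited above).

```python
import math

def psi(expected, actual, bins=10, eps=1e-6):
    """Population Stability Index between a training sample (`expected`)
    and a production sample (`actual`) of one numeric feature."""
    if not expected or not actual:
        raise ValueError("both samples must be non-empty")
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if all values are equal

    def fractions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            # Values outside the training range land in the first or last bin.
            counts[min(max(idx, 0), bins - 1)] += 1
        # Floor empty bins at eps so the logarithm below stays defined.
        return [max(c / len(values), eps) for c in counts]

    e, a = fractions(expected), fractions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))

# Hypothetical feature values: production has shifted upward by 50.
training = [float(v) for v in range(100)]
production = [v + 50 for v in training]

DRIFT_THRESHOLD = 0.25  # assumed alert level
drifted = psi(training, production) > DRIFT_THRESHOLD
```

Run on a schedule against each important feature, a check like this turns "the data changed" from a production surprise into an alert.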

3. No Semantic Layer

The model doesn't know what your data means. It only knows what the data says. If "ARR" means three different things in three different tables, the model has no principled way to choose between them, so it will effectively pick one arbitrarily. A semantic layer translates raw data into business-meaningful context the model can reason about reliably.
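At its smallest, a semantic layer is a registry that maps each business term to exactly one governed definition and refuses to guess. The sketch below is a toy illustration, not how any particular product implements it: the `SemanticLayer` class, the `MetricDefinition` fields, and the ARR expression are all hypothetical.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class MetricDefinition:
    name: str
    description: str
    expression: str  # hypothetical SQL-like expression

class SemanticLayer:
    """Maps each business term to a single governed definition."""

    def __init__(self):
        self._metrics = {}
        self._aliases = {}

    def register(self, metric, aliases=()):
        key = metric.name.lower()
        if key in self._metrics:
            # A second definition for the same metric is rejected, not merged.
            raise ValueError(f"{metric.name!r} is already defined")
        self._metrics[key] = metric
        for alias in aliases:
            self._aliases[alias.lower()] = key

    def resolve(self, term):
        key = term.lower()
        key = self._aliases.get(key, key)
        if key not in self._metrics:
            # Fail loudly instead of letting the model improvise a meaning.
            raise KeyError(f"no governed definition for {term!r}")
        return self._metrics[key]

layer = SemanticLayer()
layer.register(
    MetricDefinition("ARR", "Annualized recurring revenue", "SUM(mrr) * 12"),
    aliases=["annual recurring revenue"],
)
arr = layer.resolve("Annual Recurring Revenue")
```

An AI feature that calls `resolve` before building a query gets the same answer every time, and an unknown term surfaces as an error rather than a hallucinated definition.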

4. Governance That Wasn't Designed for AI

Traditional governance was built for compliance: who can see what, and when. AI governance is different. It needs to manage the full model lifecycle: what data trained the model, how outputs are validated, how drift is detected, and how regulatory and compliance requirements (GDPR, HIPAA, SOC 2) are enforced at inference time.

Teams that skip this step don't discover the gap until a compliance review or a model output causes a customer incident.
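To make "enforced at inference time" concrete, here is a minimal sketch that filters a record down to the fields a caller's role is allowed to expose before anything reaches the model. The roles, field names, and the `build_model_context` helper are hypothetical, and a real system would load the policy from its governance layer rather than hard-code it.

```python
# Hypothetical policy: fields each role may expose to the model.
FIELD_POLICY = {
    "support_agent": {"account_id", "plan", "open_tickets"},
    "finance_analyst": {"account_id", "plan", "arr"},
}

def build_model_context(record, role):
    """Return only the fields `role` may pass to the model at inference time."""
    allowed = FIELD_POLICY.get(role)
    if allowed is None:
        # Unknown roles get nothing rather than everything.
        raise PermissionError(f"role {role!r} has no inference-time policy")
    return {key: value for key, value in record.items() if key in allowed}

# Hypothetical customer record with sensitive fields mixed in.
record = {
    "account_id": "A-1001",
    "plan": "enterprise",
    "arr": 120000,
    "open_tickets": 3,
    "patient_notes": "restricted",
}
support_context = build_model_context(record, "support_agent")
```

The point of the design is placement: the check sits between the data and the prompt, so database-level permissions alone are never the last line of defense.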

The AI-Ready Data Checklist

Use this before you scope the next AI feature. If you can't check every box, you've found your bottleneck.

Context and Semantics

Every key business metric has a single, agreed-upon definition stored in one place

Business definitions, technical metadata, and governance policies live in a unified layer (not 8 separate tools)

Your AI model can query that context in real time, not just at training time

Data Quality

Data quality checks run continuously, not just at ingestion

Your pipeline surfaces edge cases and outliers rather than filtering them out

You have a process for detecting when production data drifts from training conditions

Governance and Lineage

You can trace every data point from source to model output

Access controls are enforced at inference time, not just at the database level

Your compliance requirements (GDPR, HIPAA, SOC 2) are built into the AI lifecycle, not bolted on after launch

Pipeline Architecture

Your data connectors cover all the sources the AI feature needs (CRM, product events, financials, etc.)

The pipeline is built for iteration, not a one-time load

You can add a new data source without rebuilding the semantic layer from scratch

Key insight: If you're failing the Context and Semantics or Governance and Lineage sections, no amount of model tuning will fix your feature. The model is only as reliable as the context it can access.

Most teams discover these gaps after the POC fails to scale, when the pressure to ship is highest and the cost of fixing infrastructure is steepest. Running this checklist before you start building is the single highest-leverage thing you can do to protect your roadmap.

What Fixing This Actually Looks Like

The teams that ship AI features on schedule share one thing in common: they treated data infrastructure as a product requirement, not an afterthought.

That means building a unified data foundation. It means having a semantic layer that gives every AI feature a consistent, governed view of your business data. And it means continuous quality checks that catch drift before it becomes a production incident.

At DataGOL, we've seen this play out across SaaS teams in healthcare, fintech, e-gaming, and vertical AI. The pattern is consistent: teams that invest in the data layer first ship AI features in 8 to 10 weeks. Teams that skip it spend 9 months debugging hallucinations and compliance gaps.

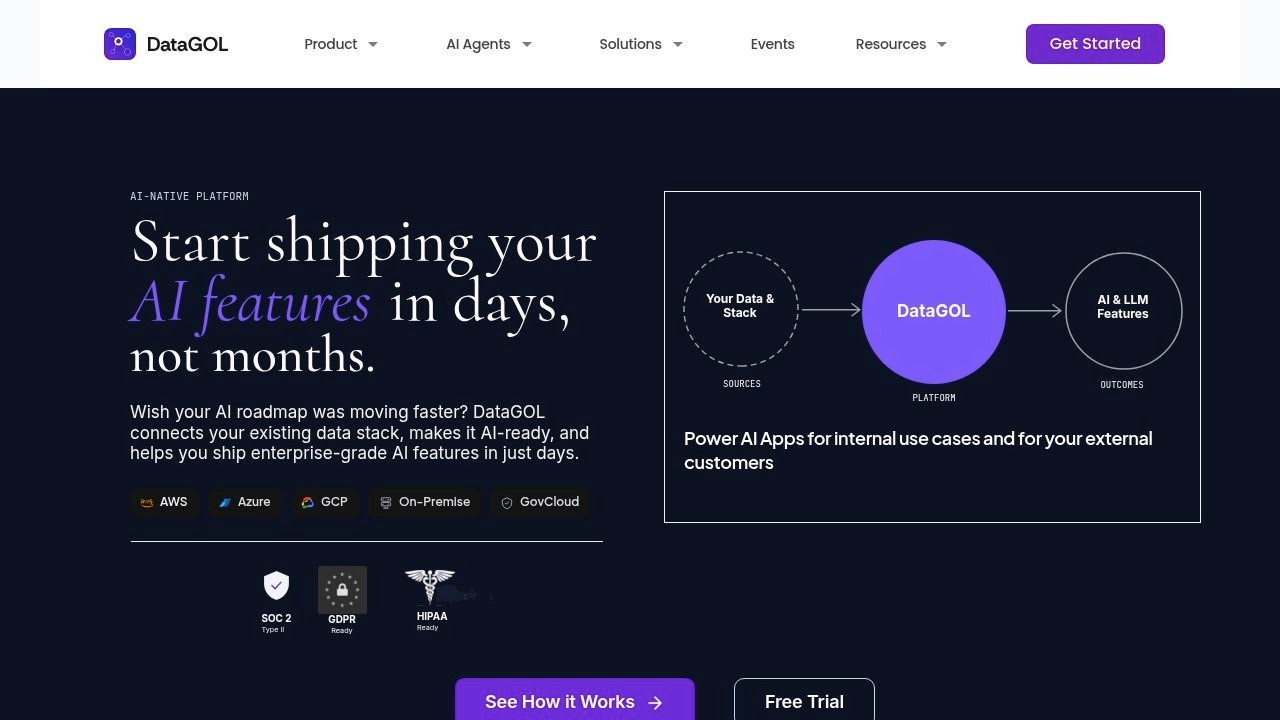

Our platform unifies data ingestion, semantic modeling, governance, and AI feature deployment into a single environment. No stitching together 8 tools. No rebuilding context from scratch for every new feature. If you want to see what your own data looks like running on that foundation, we run a 2-Week Proof of Value where you bring your actual data and we ship an AI feature end-to-end.

For a deeper look at what production-grade AI architecture requires once the data foundation is in place, read our field report on the 3 control systems that hold up in production.

Author

Vinod SP

Seasoned Data and Product leader with over 20 years of experience launching and scaling global products for enterprises and SaaS start-ups, with a strong focus on Data Intelligence and Customer Experience platforms and on driving innovation and growth in complex, high-impact environments.