How to Ship AI Features Faster with DataGOL

You have good engineers. You have a model. You have a roadmap full of AI features your users actually want. So why is it taking months to ship anything?

The honest answer is rarely the model. It is the agent harness - the connectors, pipelines, schemas, catalogs, and governance wiring that have to work reliably before any agent can do anything useful. Most teams underestimate how much time gets lost building and maintaining this infrastructure before a single line of feature code gets written. Integration work. Schema cleanup. Pipeline orchestration. Governance sign-off. Metadata alignment. By the time your team is ready to build the actual feature, weeks have already disappeared into the plumbing.

This is the pattern we see over and over at SaaS companies trying to move fast on AI. The bottleneck is not talent or ambition. It is the fragmented stack sitting between raw data and production-ready AI features.

The typical pre-feature checklist most teams underestimate:

Wiring data sources through separate ingestion and ETL tools

Cleaning and enriching data before it is safe to use in a model

Building and maintaining a catalog so teams can find and trust the right data

Setting up governance and lineage to satisfy compliance and reliability requirements

Connecting the prepared data to an AI app layer or analytics surface

Keeping all of the above synchronized when upstream schemas change

Each step is manageable in isolation. Together, they routinely push AI feature timelines from weeks into months before a single line of model code gets written. DataGOL cuts data complexity by 90% by collapsing these steps into one system, so teams spend their sprints shipping features, not maintaining pipelines.

What Actually Slows AI Feature Shipping

Most engineering leaders assume the hard part is the model. It is not. The model is a solved problem. The hard part is the agent harness - the connectors, pipelines, schemas, catalogs, and governance wiring that have to work reliably before any agent can do anything useful. That is where the delays accumulate, and that is where most AI roadmaps stall.

Here are the four bottlenecks that consistently push AI launch timelines from weeks to months:

Connectors and Integration. Every new data source requires custom connectors, transformation logic, and ongoing maintenance. When sources number in the dozens, this becomes a full-time job before any AI work starts.

Changing schemas. Source systems change independently of your data pipelines. Without automatic schema change detection, a single upstream change can silently break downstream models and features, and teams only find out when something fails in production.

Weak data observability. When engineers cannot see the health, freshness, and lineage of their data in real time, they spend significant time debugging rather than building. Trust in the data is low, so every new feature requires manual validation before it can ship.

Fragmented governance. Compliance and access controls are managed separately across ingestion, storage, and application layers. This creates duplicated effort and approval bottlenecks that slow down every release cycle.

The real cost of fragmentation: Every tool handoff between data engineering, analytics, and application teams loses context, and for AI features, lost context is not just an inconvenience. It is the difference between an AI application that produces reliable outputs and one that hallucinates, drifts, or silently degrades in production. A pipeline that breaks at the ingestion layer does not just delay a release by days. It corrupts the verified context your agents depend on, and teams often do not find out until something fails in front of a user. The DataGOL data intelligence platform eliminates this by replacing fragmented handoffs with a single control plane where context is preserved end-to-end, from raw source to production agent.

The common thread across all four bottlenecks is that they are infrastructure problems, not model problems. Solving them requires rethinking the system around the model, not just the model itself.
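To make the schema bottleneck concrete, here is a minimal sketch of what detecting an upstream schema change involves: comparing two snapshots of a source schema and classifying the differences. It is an illustration only, not DataGOL's implementation. The `SchemaDiff` class, the `diff_schemas` function, and the sample `orders` columns are all hypothetical, and treating additive changes as non-breaking is an assumption of this sketch.

```python
from dataclasses import dataclass, field


@dataclass
class SchemaDiff:
    """Differences between two snapshots of a source schema."""
    added: dict = field(default_factory=dict)
    removed: dict = field(default_factory=dict)
    retyped: dict = field(default_factory=dict)

    @property
    def breaking(self) -> bool:
        # Assumption: removed or retyped columns can break downstream
        # consumers, while purely additive changes are treated as safe.
        return bool(self.removed or self.retyped)


def diff_schemas(old: dict, new: dict) -> SchemaDiff:
    """Compare two {column_name: type_name} snapshots."""
    diff = SchemaDiff()
    for name, type_name in new.items():
        if name not in old:
            diff.added[name] = type_name
        elif old[name] != type_name:
            diff.retyped[name] = (old[name], type_name)
    for name, type_name in old.items():
        if name not in new:
            diff.removed[name] = type_name
    return diff


# Hypothetical snapshots of an upstream "orders" table.
yesterday = {"order_id": "int", "amount": "float", "region": "str"}
today = {"order_id": "int", "amount": "str", "channel": "str"}

result = diff_schemas(yesterday, today)
print(result.added)     # {'channel': 'str'}
print(result.removed)   # {'region': 'str'}
print(result.retyped)   # {'amount': ('float', 'str')}
print(result.breaking)  # True
```

The comparison itself is simple. The hard part in a fragmented stack is running it on every source, on every sync, and routing the result to the people and systems that depend on the changed columns.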

How DataGOL Compresses the Path from Data to Feature

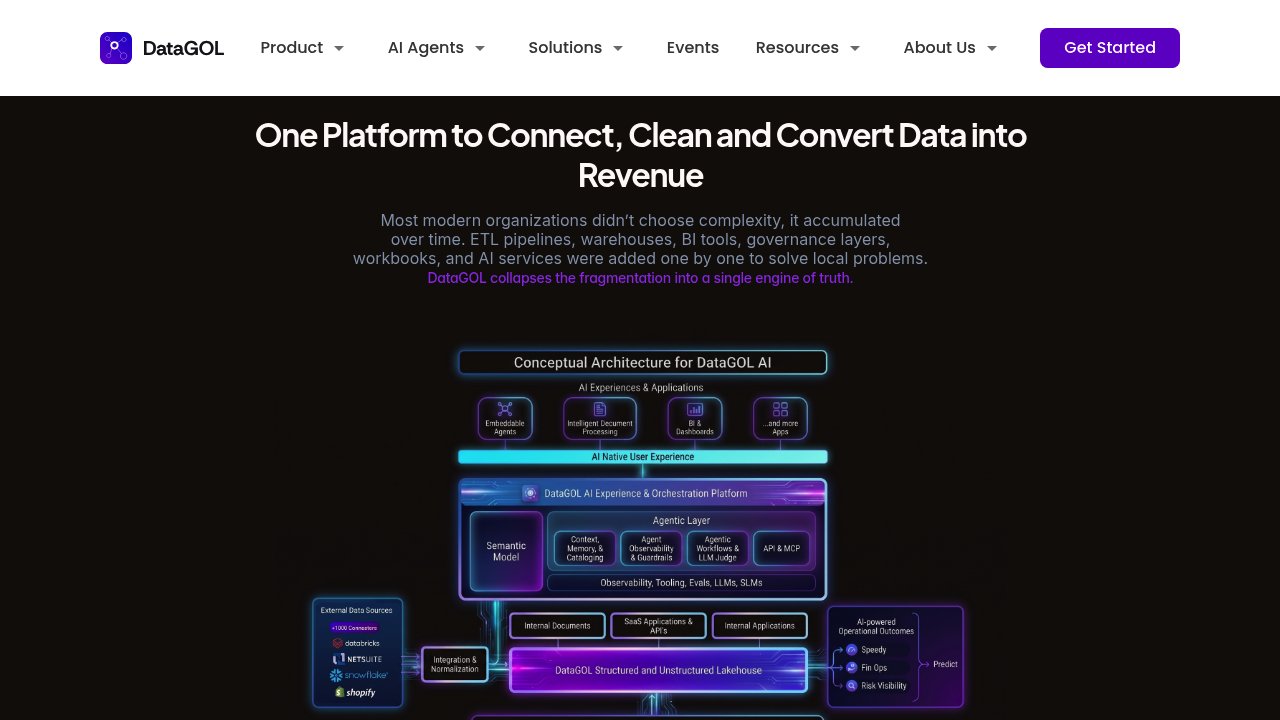

The core idea behind DataGOL is straightforward: instead of stitching together separate tools for ingestion, transformation, cataloging, governance, and AI app development, you do all of it in one system.

Source integration and data preparation that used to take weeks now takes hours. DataGOL customers deploy in weeks, not months, at 50-80% lower infrastructure cost than a fragmented stack.

How the path compresses:

Ingestion happens once. Structured, semi-structured, and unstructured data from hundreds of sources flows into a unified lakehouse without custom engineering for each connection.

Transformation and enrichment are built in. Data is cleaned, enriched, and quality-tiered automatically as it moves through the platform, so it arrives at the AI layer already trusted and ready.

Cataloging is AI-driven. Metadata is generated and maintained automatically, so teams can discover and understand data without manual documentation sprints.

Governance is not a separate layer. Access controls, lineage, and compliance are embedded in the same platform, not bolted on through a separate tool with its own integration overhead.

AI app development starts from prepared data. Because the lakehouse is AI-ready from day one, building features, agents, and analytics surfaces does not require a separate data preparation phase.

Key architecture principle: Ingestion, transformation, and governance happen once, then every agent and application consumes the same verified context. This means a new AI feature does not trigger a new round of data work. The foundation is already there.

The result is a single control plane for the entire data ecosystem. Teams stop stitching tools together and start spending their time on the features users actually see.
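As a rough illustration of the "cleaned, enriched, and quality-tiered" step described above, the sketch below assigns a record to a bronze, silver, or gold tier. The tier rules here are assumptions made for the example (bronze means required fields are missing, silver means the record is clean and complete, gold means it is also enriched), and the field names and lookup table are hypothetical. A real platform would apply far richer checks.

```python
# Hypothetical configuration for this sketch.
REQUIRED = ("customer_id", "country")
REGION_BY_COUNTRY = {"DE": "EMEA", "US": "AMER"}


def clean(record: dict) -> dict:
    """Strip whitespace from string values; empty strings become None."""
    cleaned = {}
    for key, value in record.items():
        if isinstance(value, str):
            value = value.strip() or None
        cleaned[key] = value
    return cleaned


def tier(record: dict) -> tuple:
    """Return (tier_name, processed_record) for one raw record."""
    cleaned = clean(record)
    # Bronze: a required field is missing, so the record is kept but not trusted.
    if any(cleaned.get(name) is None for name in REQUIRED):
        return "bronze", cleaned
    # Silver: clean and complete, but no enrichment is available.
    region = REGION_BY_COUNTRY.get(cleaned["country"])
    if region is None:
        return "silver", cleaned
    # Gold: clean, complete, and enriched with business context.
    return "gold", {**cleaned, "region": region}


print(tier({"customer_id": 1, "country": " DE "}))
print(tier({"customer_id": 2, "country": "BR"}))
print(tier({"customer_id": None, "country": "US"}))
```

Because tiering runs once at the platform level in this model, every downstream feature can filter on the tier instead of re-validating the same records.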

Layer by Layer: Where the Speed Gains Come From

Here is what the acceleration looks like at each layer of the stack, mapped to the specific friction it removes and the shipping impact it creates.

| Platform Layer | Friction Removed | Shipping Impact |
|---|---|---|
| Connectors and Ingestion | Eliminates custom connector builds for each source; handles structured, semi-structured, and unstructured data | New data sources go live in hours, not weeks |
| AI-Driven Transformation | Automates data cleaning, enrichment, and quality tiering (bronze, silver, gold) | Agentic tools make data AI-ready faster, without a separate preparation sprint |
| AI Data Cataloging | Auto-generates and maintains metadata, classifications, and business context | AI-driven cataloging enriches metadata and business context, giving agents and models more accurate, trustworthy context to work with |
| Schema Change Detection | Alerts teams instantly when upstream schemas change before pipelines break | Eliminates silent failures that delay feature releases |
| Lineage and Observability | Maps data flow end-to-end across all systems in real time | Engineers debug in minutes, not days; compliance sign-off is faster |
| AI-Ready Lakehouse | Unified storage with built-in ML capabilities from day one | No separate data prep layer before model training or feature development |
| AI App Development | Build AI-powered applications directly from prepared data inside the platform | Removes the gap between data work and product delivery |
| Conversational Analytics | Natural language querying for all users, not just engineers | Product and business teams can validate features without waiting on data team bandwidth |
| Workflow Automation | Data-triggered workflows across hundreds of app integrations | Reduces manual coordination between teams during release cycles |

Why the table above matters beyond the feature list

Each row represents a place where teams using fragmented stacks lose time. A custom connector build is not just a one-time cost. It requires documentation, maintenance, and debugging every time the source system changes. An undocumented dataset is not just inconvenient. It means every new engineer who touches it has to reverse-engineer what it means before they can use it safely in a feature.

DataGOL does not just automate these tasks. It removes the category of problem entirely by making each layer aware of the others. Schema change detection is useful on its own. But when it is connected to the same platform that runs your pipelines, your catalog, and your lineage, a detected change can trigger an alert, update the catalog entry, and flag affected downstream features in one action, rather than requiring manual coordination across three separate tools.
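The "one action" idea above can be sketched in a few lines: when the lineage graph, the catalog, and the alerting path live in the same system, a single handler can update all three. This is a simplified illustration under stated assumptions, not DataGOL's API. The `LINEAGE` graph, the asset names, and the `handle_schema_change` function are hypothetical.

```python
from collections import deque

# Hypothetical lineage graph: each asset maps to the assets that read from it.
LINEAGE = {
    "crm.accounts": ["staging.accounts"],
    "staging.accounts": ["gold.customer_360"],
    "gold.customer_360": ["feature.churn_score", "agent.support_copilot"],
    "billing.invoices": ["gold.revenue"],
}


def downstream(asset: str, lineage: dict) -> list:
    """Breadth-first walk returning every asset reachable from `asset`."""
    seen = {asset}
    queue = deque([asset])
    order = []
    while queue:
        current = queue.popleft()
        for child in lineage.get(current, []):
            if child not in seen:
                seen.add(child)
                order.append(child)
                queue.append(child)
    return order


def handle_schema_change(asset: str, change: str, catalog: dict, lineage: dict) -> dict:
    """Update the catalog entry and return an alert plus the flagged assets."""
    affected = downstream(asset, lineage)
    catalog.setdefault(asset, {})["last_schema_change"] = change
    return {"alert": f"{asset}: {change}", "affected": affected}


catalog = {}
event = handle_schema_change(
    "crm.accounts", "column 'region' removed", catalog, LINEAGE
)
print(event["affected"])
# ['staging.accounts', 'gold.customer_360', 'feature.churn_score', 'agent.support_copilot']
```

With three separate tools, each of these steps would need its own integration and its own owner. Here the unrelated `billing.invoices` branch is left untouched, which is the point of walking lineage rather than alerting everyone.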

The compounding effect: Every layer that no longer requires a separate tool, a separate integration, or a separate team handoff shortens the path to a shipped feature. Across a full AI roadmap, that compression adds up to months recovered per year.

Why Unified Architecture Beats Stitching Tools Together

The fragmented stack is not a bad idea that failed. It is a reasonable idea that worked at a smaller scale and then became the bottleneck as AI ambitions grew. Here is what that transition looks like in practice.

Fragmented stack (point solutions stitched together):

Each tool requires its own integration, maintenance, and upgrade cycle

Governance policies must be replicated across ingestion, storage, and application layers

Pipeline failures are hard to trace because observability is split across systems

Every new AI use case restarts the integration work from scratch

Human coordination between teams fills the gaps between tools, creating "human glue" that is slow and error-prone

Platform costs scale with the number of tools, not the value delivered

Unified platform (DataGOL):

One integration layer serves every downstream use case

Governance, lineage, and compliance are embedded once and inherited everywhere

End-to-end observability means failures surface immediately with full context

New AI features reuse the same prepared data foundation without additional setup

Coordination overhead drops because teams work inside one system with shared context

Infrastructure costs are cut by 75% compared to maintaining a fragmented stack

The economic case is straightforward. Replacing fragmented tools and manual coordination with a single agentic system reduces both platform spend and the hidden cost of human glue. The speed case is equally clear: fewer moving parts means fewer things that can slow a release down.
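One way to picture governance that is "embedded once and inherited everywhere" is a policy attached to a source asset and resolved through lineage for every derived asset. The sketch below is an assumption-laden illustration rather than a description of how DataGOL implements it. The `POLICIES` and `PARENT` tables, the role names, and the deny-by-default rule are all hypothetical.

```python
# Hypothetical policy, defined once on the source asset.
POLICIES = {
    "crm.accounts": {
        "allowed_roles": {"analyst", "support_agent"},
        "masked_columns": {"email"},
    },
}

# Hypothetical lineage: each derived asset points to its parent.
PARENT = {
    "staging.accounts": "crm.accounts",
    "gold.customer_360": "staging.accounts",
}


def effective_policy(asset: str):
    """Walk up the lineage until an asset with an explicit policy is found."""
    current = asset
    while current is not None:
        if current in POLICIES:
            return POLICIES[current]
        current = PARENT.get(current)
    return None  # No policy anywhere in the chain.


def read(asset: str, role: str, row: dict) -> dict:
    """Return the row with masked columns hidden, or refuse access."""
    policy = effective_policy(asset)
    # Assumption: deny by default when no policy applies or the role is not allowed.
    if policy is None or role not in policy["allowed_roles"]:
        raise PermissionError(f"{role} may not read {asset}")
    return {
        key: ("***" if key in policy["masked_columns"] else value)
        for key, value in row.items()
    }


row = {"name": "Ada", "email": "ada@example.com"}
print(read("gold.customer_360", "analyst", row))
# {'name': 'Ada', 'email': '***'}
```

In a fragmented stack, the same masking rule would have to be re-declared in the ingestion tool, the warehouse, and the application layer, and kept in sync by hand.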

What Faster AI Feature Shipping Looks Like in Practice

The architecture argument is only useful if it translates to real outcomes for real teams. Here is what changes after DataGOL is in place.

For engineering teams:

Pipeline development time drops significantly, freeing engineers to focus on feature work rather than data wiring and quality issues

Schema changes no longer cause silent production failures; automatic detection surfaces issues before they reach users

Custom connectors that previously took days to build can be developed in hours using DataGOL's AI-accelerated connector framework

No need to build a separate agent harness. Building one from scratch means wiring context management, tool routing, memory layers, and orchestration logic before a single agent runs reliably in production - work that typically consumes weeks of engineering time. DataGOL absorbs the harness entirely: because ingestion, cataloging, governance, and context enrichment are already handled by the platform, agents have verified, structured context from day one.

Engineers can embed agents directly and expose MCPs instantly, skipping the scaffolding and shipping the capability users will actually see.

For product and analytics teams:

Business analysts gain access to trusted, up-to-date data from across the organization for the first time, enabling them to create reliable dashboards and validate AI features faster

Operational teams can make decisions with confidence, with fewer data-related disputes and escalations slowing down iteration

For executives:

A faster path to becoming AI-native, with production-ready features shipping in weeks rather than quarters - compressing the time it takes to expand TAM with AI-powered capabilities

Reduced churn risk: teams that ship AI features faster retain customers who would otherwise move to competitors already offering those capabilities

Substantial cost savings in development and engineering overhead, with customers reporting 50-80% lower infrastructure costs compared to their previous stack

Observed outcome: Within one month of implementing DataGOL, one customer's data engineering team significantly reduced pipeline development time, gained real-time observability across all data flows, and enabled business analysts to produce cross-functional AI-powered insights that were previously impossible to generate.

Who Should Consider DataGOL First

If any of the outcomes in the section above sound out of reach with your current stack, that is a signal worth paying attention to.

You are likely a good fit if:

Your team is actively building AI features but losing weeks to data preparation, source integration, or pipeline maintenance before any model work starts

You are managing five or more data tools and feeling the coordination overhead between them

Your engineers are spending more time debugging pipelines and validating data than shipping features

You have brittle pipelines that break when upstream schemas change, and no automated way to catch those changes early

Your data catalog is incomplete or manually maintained, meaning engineers spend time on discovery that should go toward delivery

You are building agents but spending weeks on the harness - wiring context management, tool routing, memory, and orchestration - before a single agent runs in production

You want to reduce infrastructure spend while increasing AI delivery velocity, not add another tool to the stack

You are especially likely to see fast results if:

Your team wants to validate quickly before committing. DataGOL offers a 2-week proof of value engagement where you test the platform against your actual data and use cases - so you know exactly what changes before you sign anything.

Shipping AI Faster Starts Before the Model

If your AI features are shipping slowly, the most useful question to ask is not "how do we improve the model?" It is "how much time are we spending on the agent harness before the model even runs?"

For most SaaS engineering teams, the answer is uncomfortable. Weeks, sometimes months, are lost to building and maintaining the connectors, pipelines, schemas, catalogs, and governance wiring that sit between raw data and a working agent. None of that work is visible to users. All of it delays the features they are waiting for.

The short version of what DataGOL changes:

Ingestion, transformation, and governance happen once, not repeatedly for every new feature

Data is AI-ready from day one, not after a separate preparation phase

Every layer of the stack is connected, so a change in one place does not silently break another

No agent harness to build. The work that typically consumes weeks before a single agent runs in production - context management, tool routing, memory, orchestration, and MCP exposure - is absorbed by the platform. Engineers can embed agents directly and expose MCPs instantly, skipping the scaffolding and shipping the capability users will actually see

Teams spend their cycles on features, not plumbing

The fastest path to shipping AI features is not a better model or a bigger engineering team. It is removing the agent harness work that slows every feature down before it starts.

If your current stack is the bottleneck, contact the DataGOL team to discuss your AI roadmap. The 2-week proof of value is a low-risk way to find out exactly how much time you are leaving on the table.

Author

Vinod SP

Seasoned data and product leader with over 20 years of experience launching and scaling global products for enterprises and SaaS start-ups, with a strong focus on data intelligence and customer experience platforms and on driving innovation and growth in complex, high-impact environments.